Ensuring Data Governance and Compliance in a Generative AI and Privacy-Focused World

- Karl Aguilar

- Nov 10, 2025

- 3 min read

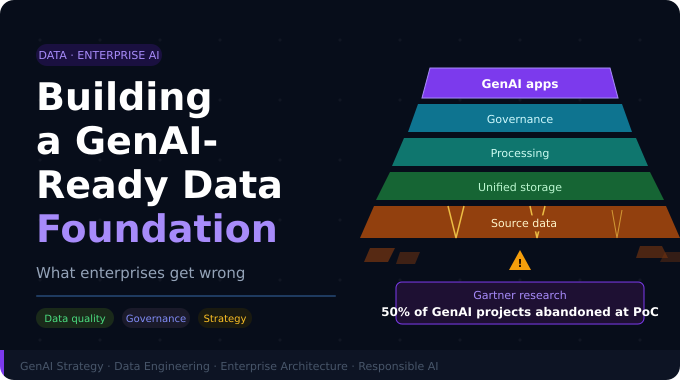

As artificial intelligence—especially Generative AI—becomes more embedded in how organizations operate, the stakes around data governance and compliance are growing fast.

AI is not just changing how we create, consume, and organize data—it’s introducing new risks that many businesses are not fully prepared to manage.

From content generation to decision automation, generative AI models can expose sensitive information, amplify bias, and weaken compliance postures if deployed without proper guardrails.

How Generative AI Changes the Risk Landscape

While the benefits of Generative AI are real, so are the risks. Understanding them is critical to deploying the technology safely and responsibly.

Key Risks:

Data Consumption at Scale: Generative AI models often ingest massive volumes of data—structured and unstructured—including regulated or proprietary information. This raises the risk of exposure, misuse, or accidental leaks.

Opaque Model Training: Many models are trained on data with unknown origins, making it difficult to verify licensing, provenance, or embedded bias.

Misinformation and Misrepresentation: AI-generated content can appear credible while being factually incorrect—posing legal and reputational risks.

Shadow AI and Data Leakage: Employees experimenting with consumer AI tools may unknowingly expose sensitive internal data to external systems, creating governance blind spots.
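One way to reduce the leakage risk described above is to scrub obvious identifiers from a prompt before it leaves the organization. The sketch below is illustrative only: the `PATTERNS` table and the `redact` helper are hypothetical names, and two regular expressions are nowhere near a complete definition of sensitive data. A real deployment would rely on the organization's own classification rules.

```python
import re

# Hypothetical patterns for illustration; real policies would cover far more.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a placeholder and count matches per label."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


prompt = "Summarize the complaint from jane.doe@example.com, SSN 123-45-6789."
safe_prompt, findings = redact(prompt)
print(safe_prompt)
print(findings)
```

The returned counts can feed an audit trail, so that governance teams see how often employees attempt to send sensitive data to external tools.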

These risks are especially acute in regulated industries like finance, healthcare, and government, where explainability and compliance are mandatory.

Governance Models Evolving to Meet AI Risk

To address these risks, organizations are adopting various governance models—each with its own strengths and limitations:

Data Governance

The traditional model, built to manage static data assets, with a focus on quality, access, security, and lifecycle management.

AI Governance

Applies principles of ethics, fairness, and accountability to the development and deployment of AI models.

AI-Driven Data Governance

An emerging model where AI tools automate policy enforcement, detect anomalies, and dynamically adapt controls—essential for governing other AI systems.

While these models aren’t mutually exclusive, organizations must decide how to operationalize them to align with their data maturity, industry requirements, and innovation goals.

Common Challenges in Practice

Even with the right frameworks in place, execution can be difficult. Common obstacles include:

- Rapidly evolving AI behavior that makes static policies outdated

- Conflicting internal agendas: business units want agility, while IT pushes for control

- Shadow AI initiatives outside centralized oversight

Without a unified governance architecture, these gaps create friction, security risks, and compliance liabilities.

What a Mature AI Governance Architecture Should Include

Effective governance requires more than policies—it needs to be embedded into the AI lifecycle. The most forward-thinking organizations are implementing AI-native tools that deliver:

✅ Automatic anomaly detection and correction

✅ Continuous data quality monitoring and policy enforcement

✅ Behavior-based access controls that adapt to real usage

✅ Governance expressed in natural language, so that policies are transparent and accessible

These components ensure governance is dynamic, contextual, and actionable—not just a checkbox exercise.
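To make behavior-based controls more concrete, here is a minimal sketch of one possible anomaly check: compare a user's record-access count for today against that user's own history and flag large deviations. The function name `is_anomalous`, the three-standard-deviation threshold, and the sample numbers are all assumptions for illustration, not a description of any particular product.

```python
from statistics import mean, stdev


def is_anomalous(history: list[int], today: int, threshold: float = 3.0) -> bool:
    """Flag today's count if it sits more than `threshold` sample standard
    deviations above the mean of the user's historical daily counts."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today > mu  # perfectly flat history: any increase stands out
    return (today - mu) / sigma > threshold


# Hypothetical daily record-access counts for one user over the past week.
history = [20, 22, 19, 21, 23, 20, 21]
print(is_anomalous(history, 22))   # an ordinary day
print(is_anomalous(history, 400))  # a sudden bulk export
```

A flagged result would typically trigger a step-up check or a temporary restriction rather than an outright block, which keeps the control adaptive instead of static.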

Best Practices for AI Privacy and Compliance

To ensure that governance scales with your AI ambitions, focus on:

- Strong data governance policies (e.g., classification, access control, audit logging, and alignment with GDPR/CCPA)

- Privacy by design principles (i.e., integrating privacy into the development lifecycle rather than adding it after deployment)

- Transparency in data usage (e.g., clear policies, user communication, and model explainability)
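The first practice above combines three mechanisms: classification, access control, and audit logging. The sketch below shows how they might fit together in a few lines. Everything in it is hypothetical, including the `Sensitivity` levels, the `CLEARANCE` table, and the role names; it is not a GDPR or CCPA compliance implementation.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    RESTRICTED = 2


@dataclass(frozen=True)
class Dataset:
    name: str
    sensitivity: Sensitivity


# Hypothetical mapping from role to the highest level that role may read.
CLEARANCE = {
    "analyst": Sensitivity.INTERNAL,
    "privacy_officer": Sensitivity.RESTRICTED,
}

audit_log: list[str] = []  # in practice this would be an append-only store


def request_access(user: str, role: str, dataset: Dataset) -> bool:
    """Decide access from the dataset's classification and log the decision."""
    allowed = CLEARANCE.get(role, Sensitivity.PUBLIC) >= dataset.sensitivity
    audit_log.append(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "dataset": dataset.name,
        "sensitivity": dataset.sensitivity.name,
        "allowed": allowed,
    }))
    return allowed


patients = Dataset("patient_records", Sensitivity.RESTRICTED)
print(request_access("ana", "analyst", patients))
print(request_access("pat", "privacy_officer", patients))
print(len(audit_log), "decisions logged")
```

Logging denied requests as well as granted ones matters here: the denials are often the first visible sign of shadow AI activity or misconfigured roles.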

Final Thought

Data governance and privacy compliance are no longer optional—they are business-critical in a world increasingly powered by Generative AI.

Regardless of which governance model you follow, the goal is the same: to create a trustworthy, secure, and adaptive foundation for responsible AI.

Organizations that embed governance into the way AI is built, trained, and deployed won’t just reduce risk—they’ll unlock long-term competitive advantage.

How Pandoblox Helps

At Pandoblox, we help organizations embed governance, compliance, and AI-readiness across every layer of their IT and data environment.

Through our Integrated Service Desk and Data Service Desk, we provide:

- Unified policy enforcement across infrastructure, data, and applications

- AI-native tools for continuous compliance, access control, and quality monitoring

- Strategic guidance for aligning AI adoption with evolving regulatory expectations

Learn more about how Pandoblox supports AI-ready data governance at pandoblox.com
